Researchers testing Apple Intelligence through developer tools have seen biases pop up when their specifically tailored queries are intentionally ambiguous about race and gender.

AI Forensics, a German nonprofit, analyzed over 10,000 notification summaries created by Apple’s AI feature. The report suggests that Apple Intelligence treats white people as the “default” while applying gender stereotypes when no gender has been specified.

According to the report, Apple Intelligence has a tendency to ignore a person’s ethnicity if they are Caucasian. Conversely, when a message mentioned any other ethnicity, the notification summary regularly repeated it.

The report found that when working with identical messages, Apple’s AI model mentioned a person’s ethnicity only 53% of the time when they were white. But those figures were considerably higher for other ethnicities; their ethnicity was mentioned 89% of the time when they were Asian, 86% when they were Hispanic, and 64% when they were Black.
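As a rough illustration of how a mention rate like the ones above could be tallied, the sketch below counts how often a summary keeps the ethnicity named in the original message. This isn't AI Forensics' code. The `mention_rate` function, the `ETHNICITY_TERMS` list, and the toy summaries are all hypothetical and exist only to show the calculation.

```python
from collections import defaultdict

# Hypothetical term list; a real audit would need a far more careful lexicon
# than naive substring matching.
ETHNICITY_TERMS = {
    "white": ["white", "caucasian"],
    "asian": ["asian"],
    "hispanic": ["hispanic", "latino", "latina"],
    "black": ["black"],
}


def mention_rate(records):
    """Return the fraction of summaries per group that mention the ethnicity.

    `records` is an iterable of (group, summary) pairs, where `group` is the
    ethnicity stated in the original message.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for group, summary in records:
        totals[group] += 1
        text = summary.lower()
        if any(term in text for term in ETHNICITY_TERMS[group]):
            hits[group] += 1
    return {group: hits[group] / totals[group] for group in totals}


# Made-up summaries, purely to exercise the function. Not data from the report.
toy_records = [
    ("white", "A man asked about the meeting."),
    ("white", "A white man asked about the meeting."),
    ("asian", "An Asian man asked about the meeting."),
    ("asian", "An Asian man asked about the meeting."),
]
rates = mention_rate(toy_records)
```

Comparing the per-group rates for otherwise identical messages is what makes a gap like 53% versus 89% meaningful, since the only thing that changes between inputs is the ethnicity mentioned.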

The research claims that Apple Intelligence assumes that the person mentioned in the messages is white the majority of the time. Effectively, the model believes that white is the norm.

Another example shows Apple Intelligence assigning gender roles when none were given.

The tests used a sentence that mentioned both a doctor and a nurse, stopping short of getting into specifics. However, Apple Intelligence created associations that weren’t in the original message in 77% of the summaries tested.

Further, 67% of those instances saw Apple Intelligence assume that the doctor was a man. It then went on to make a similar assumption that the nurse was a woman.
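A quick bit of arithmetic puts those two figures together. Assuming "those instances" refers to the 77% of summaries with added associations (our reading of the figures, not something spelled out above), the share of all tested summaries that cast the doctor as a man works out as follows.

```python
# Figures quoted above. Treating the 67% as a share of the 77% is an assumption.
share_with_added_association = 0.77
share_of_those_doctor_male = 0.67

# Multiply the nested shares to get a share of all tested summaries.
share_of_all_summaries = share_with_added_association * share_of_those_doctor_male
print(f"{share_of_all_summaries:.1%}")
```

Under that reading, roughly half of all the summaries tested assumed a male doctor.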

Notably, it’s believed that the AI’s training data led to the assumptions. They closely align with U.S. workforce demographics, suggesting that the AI is simply working with the information it was trained on.

Similar biases were observed across a variety of different criteria. The report shows that eight social dimensions, including age, disability, nationality, religion, and sexual orientation, were all subject to the AI’s assumptions.

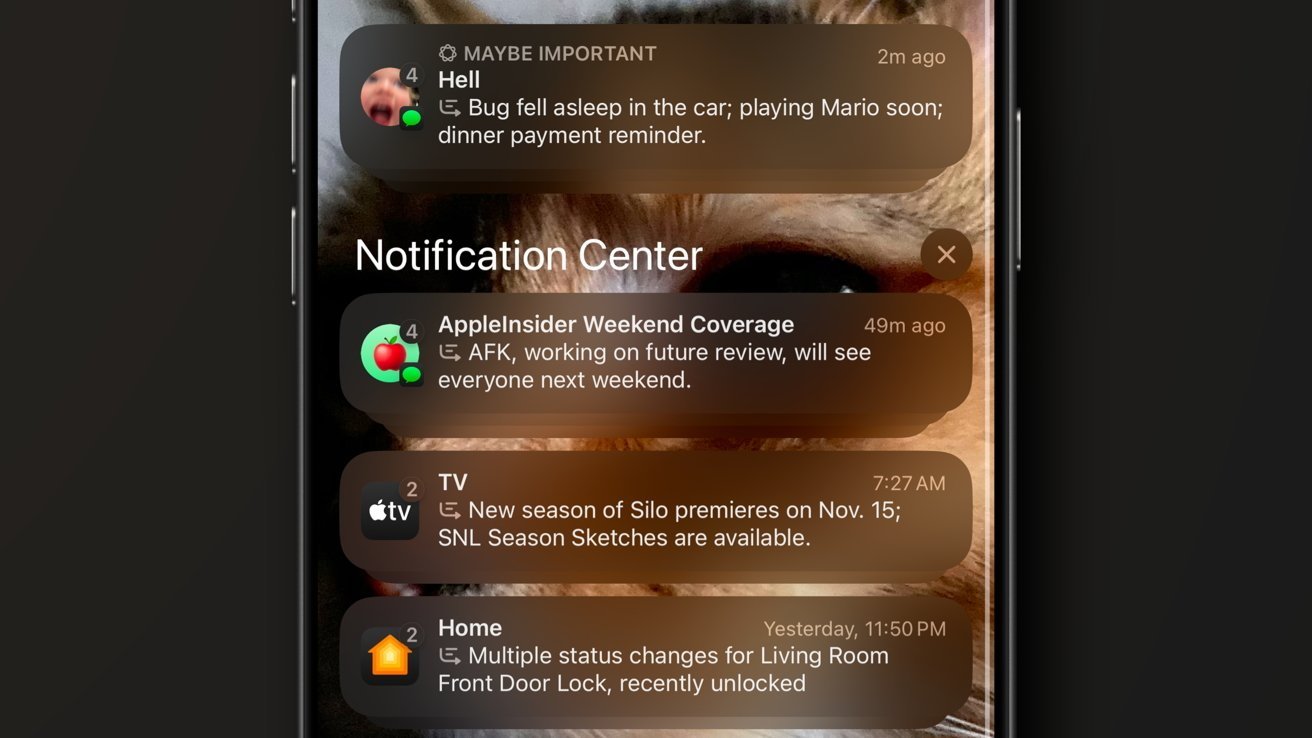

In a report detailing its work, AI Forensics explains that it used a custom application made using Apple’s developer tools to run its tests. That application hooked into Apple’s Foundation Models framework to simulate real-world messages.

That approach means that the testing closely matches what users of third-party messaging apps might experience. However, there is still some considerable room for inaccuracy.

AI Forensics admits that its “test scenarios are synthetic constructions designed to probe specific bias dimensions, not naturalistic notifications.” It adds that real messages may differ in the way that they are written and, as a result, interpreted by Apple Intelligence.

The outfit also notes that real-world messages may not use the same “ambiguous pronoun references” as its test messages. This, we think, is the biggest flaw in the research.

However, it’s important to note that any biases, like the ones shown in this report, can have a huge impact at Apple’s scale. Apple Intelligence is used on hundreds of millions of devices every day.

Similar results to those highlighted in this report may well occur in considerable numbers.

This isn’t the first time that Apple’s AI-powered notification summaries have come under fire. In December 2024, the BBC complained that summaries of its news articles were wrong.

One example notification read “Luigi Mangione shoots himself,” referring to the man arrested for the murder of UnitedHealthcare CEO Brian Thompson. Mangione was, and is, alive and currently awaiting trial.

Apple subsequently disabled notification summaries for news apps while it worked on fixing the issue. But this report shows that notifications for communication apps, like Messages, continue to prove problematic.

Apple is clearly aware of Apple Intelligence’s shortcomings. The company recently signed a deal with Google to bring its Gemini AI model to Siri.

But following reports that the revamped Siri will not ship with iOS 26.4 as expected, hopes of an imminent improvement have been dashed.

Interestingly, AI Forensics also notes that Google’s Gemma3-1B model is much smaller than Apple’s, yet more accurate. In testing, it hallucinated less frequently as well as less stereotypically.

Apple recently placed software chief Craig Federighi in charge of its AI efforts, a sign that it isn’t happy with Apple Intelligence as-is. But improvements are slow to come.

Any hope of a quick fix for the kinds of biases highlighted by AI Forensics is likely to be dashed as well.

The biases found in Apple’s AI systems are not isolated incidents but rather the result of deep-seated issues embedded within the technology’s infrastructure. The first major contributor to these biases is the training data utilized to develop these AI models. When these datasets reflect historical and societal prejudices, the AI reproduces these biases in its outputs. It’s not a failure of technology itself, but a mirror reflecting the inequalities present within the data it consumes.

Alongside this, the design of the algorithms plays a key role. These algorithms might emphasize specific types of information, leading them to unconsciously prioritize certain traits or demographics. For example, if an algorithm is more attuned to dominant narratives found in its data, it might inadvertently minimize or misrepresent minority groups or perspectives. This kind of skewed output stems from a lack of balanced representation within the algorithm’s core processes.

Moreover, the composition of the teams behind these technologies significantly impacts their development. A development team lacking in diversity may not fully perceive or address the nuances of bias issues. Diverse teams can offer a wider range of insights and perspectives, which are crucial in identifying and mitigating potential biases early in the development stages. Without these varied viewpoints, there’s a risk that products will not adequately cater to or represent the global populace.

These underlying causes highlight the importance of a well-rounded approach to AI development, one that not only integrates a breadth of diverse data into training but also consciously constructs algorithms with fairness in mind. Furthermore, shifting the makeup of development teams to represent the demographics served by their products is essential. These steps are fundamental to crafting AI systems that not only work efficiently but also equitably, serving the diverse range of individuals that interact with them every single day.

Addressing the biases inherent in AI requires concerted efforts across multiple fronts, with a vision that goes beyond mere technology fixes. The pathway to mitigating AI bias involves integrating a broad spectrum of voices, refining technological methods, and fostering continuous improvement through feedback and innovation.

A critical step forward is enhancing the diversity of training data. By ensuring that datasets include a wide array of perspectives reflective of global demographics, AI systems can become more inclusive and less prone to perpetuate existing stereotypes. This approach requires active curation and expansion of data sources to break the cycle of historical bias replication.

Innovation in algorithm design is another area where significant progress can be made. Designing algorithms that are not only transparent but also accountable can alleviate bias. Transparency in AI means opening these models up to scrutiny, allowing third parties to oversee them and suggest modifications to improve fairness. Coupled with fairness audits and impact assessments, this transparency can serve as a foundation for trust in AI systems.

An integral aspect of reducing bias is cultivating inclusive development environments. Diversity within AI development teams can dramatically enhance the awareness and sensitivity towards potential biases. Encouraging various cultural, gender, and experiential perspectives allows for a more comprehensive understanding of the multifaceted issues surrounding AI usage in diverse communities. By embedding these insights into the development phase, biases can be detected and mitigated early.

Looking to the future, continuous monitoring and feedback mechanisms stand as pillars for sustainable AI systems. By implementing real-time evaluations and integrating user feedback loops, AI technologies can adapt and refine their outputs to align more closely with ever-evolving societal norms and expectations. Through partnerships with research institutions and advocacy groups, tech companies can stay ahead in understanding bias dynamics and addressing them proactively.

Ultimately, the journey towards equitable AI requires persistence, collaboration, and an unwavering commitment to ethical principles. By embracing these practices, and continually adapting to the feedback and changing landscape, companies like Apple can spearhead the development of AI systems that reflect the diversity and complexity of the world they operate within. This vision, though ambitious, is entirely within reach if the will and resources are directed towards these transformative actions.